Why Well-Intentioned People Hide Bad News Even When Lives Are at Stake

Status-hiding in life sciences isn't dishonesty. It's rational behavior in systems that punish candor. The real cost compounds from the task level to the boardroom.

I still remember the exact moment I decided to stay quiet.

We were fourteen months into a Phase II program for a once-weekly oral PARP inhibitor targeting BRCA1-mutated ovarian cancer. Solid mechanism. Clean Phase I safety data. The kind of program you join because you believe it could actually change outcomes for patients who have run out of options.

Then, on a Thursday morning in March, a competitor announced FDA breakthrough therapy designation for a combination regimen in the same patient population. Their Phase II data was strong. Suddenly our twelve-month enrollment timeline wasn't just ambitious. It was potentially irrelevant.

All hell broke loose. Within forty-eight hours, leadership convened an emergency portfolio review. The chief medical officer wanted the enrollment timeline pulled forward by four months. Commercial was already revising peak sales projections. The head of regulatory affairs was on calls with the FDA trying to understand whether our trial design still held up against the new competitive landscape.

Nobody asked the clinical operations team whether a four-month acceleration was feasible. We were told to make it happen.

I knew it wasn't going to work. Our site activation rate in Eastern Europe was running 30% behind projections. Two of our top-enrolling sites in South Korea had flagged IRB complications that would take weeks to resolve. The biostatistics team had quietly told me that the interim analysis would need 288 evaluable patients, not the 240 we'd originally powered for, because the competitor's data had shifted the expected effect size. None of this had made it into the program slides.

The following Tuesday, I sat in the weekly status meeting. Twelve people around the table. The program lead opened her laptop, pulled up the RAG dashboard, and asked the room: "Where are we on enrollment?"

Silence. The kind of silence where everyone is looking at the same slide and nobody wants to go first.

The clinical supply lead said his piece was on track. Manufacturing confirmed drug product availability. The data management lead reported case report forms were clean. Each person reported their piece as green.

My turn. I had a slide showing site activation rates, the IRB delays, the biostatistics conversation. All of it real. All of it documented.

I reported amber. "Managing some risks. The team is working it."

Everyone in that room knew what "the team is working it" meant. It meant we were behind and nobody was going to say it. Not here. Not in front of the VP who had just told the executive committee we were on track for the Q3 interim.

I drove home that evening replaying the meeting in my head. I wasn't angry at anyone. I was angry at myself. I had the data. I had the evidence. I had a responsibility to that program and, ultimately, to the patients it was designed to help.

And I chose amber.

I chose it because I remembered what happened two years earlier, when our flagship CNS program hit a Phase III enrollment wall and the program director flagged it honestly at the portfolio review. Within a month, she was reassigned to a smaller program. Within six, she left the company. Nobody said it was because she raised the alarm. Nobody had to.

That memory was louder than any data on my slide.

I've spent a lot of years since then thinking about that meeting. Not because it was unusual, but because it wasn't. It plays out every week, in conference rooms and on video calls, across this industry. Different molecules, different indications, different companies. Same silence. Same calculation. Same outcome.

And I've come to believe the problem isn't cowardice. It isn't dishonesty. It isn't even poor leadership.

The problem is that we're human. And humans are wired, at a level deeper than any corporate training can reach, to protect their standing in the group. Losing face isn't a minor discomfort. It's a threat the brain processes the same way it processes physical danger. When the social cost of truth is high and the organizational cost of silence is deferred, the math is simple. And everyone in that conference room was doing the same math. As we explored in The Buffer Illusion, coordination creates exposure, and exposure is personally dangerous. That danger is what keeps the silence in place.

This article is about that math. Where it comes from. How it compounds. What it costs our industry. And what the organizations that have found ways to change the equation actually did differently.

Twelve People Knew. Nobody Spoke.

Here's what happened to my amber.

The program lead took the team's status inputs and assembled the weekly deck for the VP. My amber was the only non-green item. She softened it. Not maliciously. She added context: "Site activation delays in two regions being actively managed. Mitigation plan in development. No impact to primary timeline expected at this time."

That last sentence was fiction. She knew it. I knew it. But it was the kind of fiction that buys you two weeks, and two weeks might be enough to show progress. Might.

The VP took the program lead's deck and rolled it into the portfolio summary for the executive committee. Sixteen programs on one slide. My program showed green with a footnote about "regional enrollment variance under active management." By the time the words left the conference room where I'd sat with a knot in my stomach, they'd traveled three layers up and lost every sharp edge. The IRB delays were gone. The biostatistics conversation was gone. The 30% site activation gap was gone. What remained was a green dot and a footnote nobody would read. The plan had become what The Coordination Fallacy described: a compliance artifact, not a working tool. Two realities, side by side. The one in the slides and the one the team was living.

I've watched this happen so many times since then that I've started to think of it as information half-life. Not the facts themselves, but the urgency. A genuine alarm at the bench level becomes a concern at the program level, becomes a risk being managed at the portfolio level, becomes a footnote at the executive level, becomes invisible at the board level. The content erodes at every handoff. But the urgency erodes faster. By the time the signal reaches someone with the authority to act, there's no heat left in it. No reason to prioritize it over the fifteen other footnotes on the same slide.
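To make the half-life idea concrete, here is a toy sketch. The five layer names come from the paragraph above. The 50% retention per handoff is purely an illustrative assumption, not a measured value, and `urgency_by_layer` is a hypothetical helper written for this example.

```python
# Toy model of "information half-life": urgency decays at each reporting handoff.
# Layer names follow the paragraph above; the 0.5 retention factor is an
# illustrative assumption, not data from any real program.

LAYERS = ["bench", "program", "portfolio", "executive", "board"]


def urgency_by_layer(initial: float = 1.0, retention: float = 0.5) -> dict[str, float]:
    """Return the urgency remaining when the signal arrives at each layer."""
    if not 0.0 <= retention <= 1.0:
        raise ValueError("retention must be between 0 and 1")
    # The bench is the source (zero handoffs); each later layer adds one handoff.
    return {layer: initial * retention**hops for hops, layer in enumerate(LAYERS)}


if __name__ == "__main__":
    for layer, urgency in urgency_by_layer().items():
        print(f"{layer:<10} {urgency:.1%}")
```

Under that assumption, four handoffs leave about 6% of the original urgency at the board level. The exact factor matters less than the shape: the loss multiplies at every layer, so removing a single handoff preserves more of the signal than polishing any one report.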

And here's the part that haunts me about that Tuesday meeting. I wasn't the only one holding back. I found out later, weeks later, over drinks after a conference, that at least three other people at that table had concerns they hadn't raised. The clinical supply lead was worried about API availability from our secondary manufacturer. The regulatory affairs liaison had picked up signals that the FDA might request additional pharmacokinetic data. The biostatistician had been running scenarios that showed our interim futility boundary was tighter than anyone realized.

Each of them had done the same calculation I did. Each of them assumed they were the only one with doubts. So each of them stayed quiet, reinforced by the silence of everyone else.

A room full of experts, each privately holding a piece of the real picture, each assuming the group's silence meant the group was confident. The collective silence wasn't consensus. It was a hall of mirrors.

The program ran for another four months before the enrollment gap became impossible to hide. By then, the cost of the delay had compounded from a recoverable course correction into a $40 million budget overrun and an eight-month timeline extension. The patients in the trial continued on protocol. The patients not yet enrolled waited longer. The competitor's program moved ahead.

Four months of silence. That's what turned a recoverable course correction into an eight-month delay.

Green on the Outside

Project managers have a term for programs like this. They call them watermelon projects. Green on the outside, red all the way through. Slice one open and you find the problems everyone sensed but nobody surfaced. The term exists because the pattern is so common it needed a name.

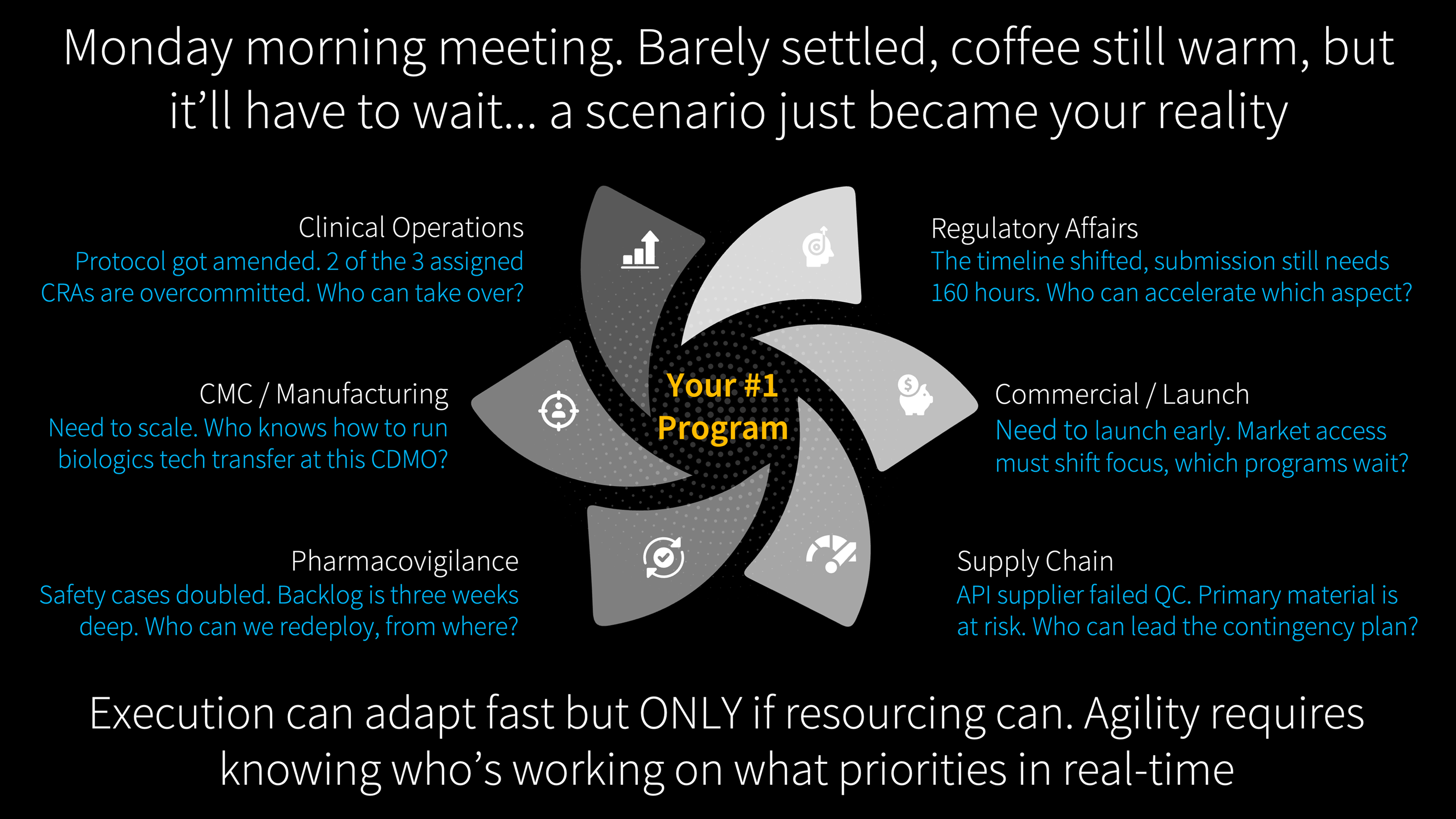

What makes it worth paying attention to isn't the individual watermelon. It's what happens when an entire portfolio is full of them. When the cascade I described in one program is running simultaneously across ten, twenty, fifty programs, each with its own amber-reported-as-green, its own footnotes that dissolve into invisibility, its own team members holding back because they remember what happened to the last person who spoke up. These are the invisible dependencies that drive portfolio trajectory. Not the science. Not the regulatory strategy. The human choices made in Tuesday meetings.

At that scale, the boardroom isn't looking at a portfolio. It's looking at a painting of a portfolio. And making resource allocation decisions, kill decisions, launch timing decisions, billion-dollar partnering decisions, based on the painting.

Leadership reviews one reality. Execution navigates another. The gap is where medicines stall.

We saw the cost of that pattern in public throughout 2025 and into 2026, at a scale that makes the stakes impossible to ignore.

Novo Nordisk entered 2024 as Europe's most valuable public company, worth over $600 billion. Over the next eighteen months, a series of setbacks eroded more than two-thirds of that value. Initial CagriSema data in late 2024 missed the company's own target of 25% weight loss, costing €90 billion in a single session. The acquisition of ocedurenone, a blood pressure drug for patients with advanced kidney disease, turned into an $817 million write-off. The Alzheimer's trial failed in November 2025. Then the CagriSema head-to-head results against Eli Lilly's Zepbound missed the non-inferiority endpoint on February 23, 2026, and the stock fell another 16%.

To their credit, Novo Nordisk adjusted. The CEO was replaced. Nine thousand jobs were cut. Board members were replaced. A new high-dose CagriSema trial was planned. These are not the actions of an organization ignoring reality. They are the actions of an organization responding to it, forcefully.

But here's the question I keep coming back to: did it have to be this painful?

Every one of those macro-corrections, the CEO change, the restructuring, the board overhaul, represents a system that calibrated late and at enormous cost. What would it have looked like if the signals had been visible and actionable earlier, not at the board level but at the task level, the milestone level, the decision level? If dozens of small course corrections had happened continuously across programs and functions rather than accumulating until they required emergency intervention?

I don't know the answer for Novo specifically. Nobody outside the organization can. But I recognize the pattern because I've lived inside it. The cascade I described in my Tuesday meeting, where information loses urgency at every handoff until the only correction left is a dramatic one, that's not unique to one company. It's how our entire industry operates. Deloitte's latest data puts the cost of developing a single drug at $2.23 billion. At those numbers, the difference between continuous micro-calibration and periodic crisis response isn't academic. It's the difference between a program that adapts in weeks and one that takes months to acknowledge what the people closest to the work already knew.

The question isn't whether operations get enough attention. The question is whether they get the right kind of attention, at the right altitude, soon enough to matter. Across most of our industry, the honest answer is no. Not because leaders don't care, but because the systems we've built don't surface what matters until it's too late to act on it quietly.

We Built a Professional Vocabulary for It

What fascinates me is that we've built an entire professional vocabulary around it. Managing up. Managing expectations. Framing the narrative. Controlling the message. These aren't dirty words. They're career skills, and in many contexts they're legitimate ones. Translating complex operational data for a senior audience who oversees sixteen programs is real work. But there's a line between translation and filtration, and most of us have crossed it so many times we've stopped noticing where it is.

Nobody calls it dishonesty. They call it executive communication.

The first time I softened a status report, it felt wrong. A small betrayal of what I knew to be true. The fifth time, it was just part of the process. By the twentieth, I didn't even notice I was doing it. That's how normalization works. Not through a single decision to start hiding information, but through a thousand small accommodations until the gap between what you report and what you know becomes part of the background. Invisible. Normal. The way things are done.

And here's the part that keeps me up at night. We've spent the last decade investing in better visibility tools. Dashboards. Portfolio analytics platforms. Control towers. Real-time status aggregation. The theory is sound: if we can see everything, we can manage everything. But every one of those systems is a display layer. They visualize whatever someone entered. If I entered amber when I knew it was red, the dashboard doesn't show the truth. It shows my amber. In high resolution. With beautiful formatting. The more sophisticated the platform, the more authoritative the fiction looks. A red status scrawled on a whiteboard invites questions. A green dot on a million-dollar analytics dashboard looks like a fact.

Harvard's Ethan Bernstein studied this and found something counterintuitive: in some environments, increased transparency actually reduces honesty because people feel more watched, more exposed, more accountable for every keystroke. More reporting infrastructure can make the problem worse, not better, because every entry is timestamped, searchable, and attributable. The tool that was supposed to surface reality becomes another reason to manage what you enter.

This runs deep. Ray Dalio built Bridgewater Associates on radical transparency because he understood that Wall Street's default operating system runs on managed truth. He had to engineer an entirely new social architecture to override what comes naturally.

And the economics of overriding it are overwhelmingly favorable. The cost of hearing bad news at month three of a program is a conversation and a course correction. The cost of hearing the same news at month eighteen is a write-off. At $2.23 billion per asset, every month of delayed truth compounds. The math overwhelmingly favors early candor. But the social architecture overwhelmingly favors late disclosure. The economics and the psychology are pointing in opposite directions.

And it's the psychology that wins. Every time.

Someone Has to Go First

I want to be careful here because it would be easy to turn this into a finger-pointing exercise. Leadership isn't transparent enough. Middle management filters too much. Teams don't speak up. Everyone blaming the layer above or below.

But that misses the point. The VP who reacts badly to bad news is doing the same math as the PM who hides it. She has a board to report to. A CEO who wants clean narratives. Investors who punish uncertainty. She learned, the same way I learned, that the professional cost of delivering unwelcome truth is immediate and personal, while the organizational cost of deferring it is diffuse and delayed. She's not the villain in this story. She's another human doing human math.

So the question isn't who needs to change. It's what would need to be true for the math to come out differently.

I've watched a handful of organizations get closer to an answer. Not perfect. Closer.

During COVID, Pfizer scrapped its multi-layered governance process and had senior leaders meet twice a week to make all critical decisions directly. Stop decisions that previously required four committees and six weeks of escalation happened in a single conversation. A vaccine went from concept to authorization in eleven months. The cascade collapsed because the architecture changed: the distance between ground truth and decision-maker shrank to almost nothing.

But it was a wartime exception. One program, one objective, unlimited urgency. Once the crisis passed, the old structures reasserted themselves. That pattern isn't unique to Pfizer. Every experiment in flattening hierarchy, from Spotify's squads to Zappos's holacracy, has eventually reverted as the organization scaled or the acute pressure faded. The proof of concept exists. The proof of sustainability doesn't. Not yet.

Amy Edmondson at Harvard has spent decades studying teams in healthcare and pharma and found something that should give us hope. The highest-performing teams in her research weren't the ones that made fewer mistakes. They were the ones that reported more of them. Not because they were worse at their jobs, but because they operated in environments where surfacing a problem early was treated as a contribution, not a career risk. High psychological safety combined with high accountability. That's the zone where truth moves freely and performance follows.

One reframe I've carried with me since I first encountered it in the high-reliability literature: the difference between organizations that punish failure and organizations that punish surprise. You can't prevent failure in drug development. Ninety percent of clinical programs fail. That's the biology. But you can build an organization where surprises are rare, because the people closest to the work feel safe enough to share what they see when they see it. "Don't fail" is impossible. "Don't surprise us" is achievable. And it changes everything about what people are willing to say in that Tuesday meeting.

None of this is easy. You don't override two hundred thousand years of social wiring with a town hall and a new set of values posters. But you can design systems and cultures where the path of least resistance is honesty rather than silence. Where portfolio intelligence surfaces reality directly from the work itself, reducing the number of human translation points where truth gets softened. Where red is treated as information rather than indictment. How to build that, from the governance structures that enable speed to the portfolio analytics that surface what's real to the leadership courage it demands, is where this conversation goes next.

I think about that ovarian cancer program sometimes. About the women who enrolled in the trial, and the ones who didn't get the chance to because we ran out of time. Eight months. That's how long the silence added.

I wonder what would have happened if I'd said red.

References

- CNBC: "Novo Nordisk stock falls after CagriSema trial fails to match weight loss of Eli Lilly's Zepbound" (February 23, 2026). cnbc.com

- PharmExec: "Novo Nordisk's CagriSema Falls Short of 25% Weight Loss Target" (February 23, 2026). pharmexec.com

- CNBC: "Novo Nordisk shares plunge after Alzheimer's drug trial fails" (November 24, 2025). cnbc.com

- Euronews: "Novo Nordisk shares plunge on the back of disappointing trial results" (December 23, 2024). euronews.com

- BioPharma Dive: "Novo nixes trial of blood pressure drug it bought in $1.3B deal" (June 26, 2024). biopharmadive.com

- Deloitte: "Measuring the Return from Pharmaceutical Innovation 2024" (March 2025). deloitte.com

- Deloitte / Bernstein: "The Transparency Paradox: Transparency in the Workplace" (2024). deloitte.com

- O.C. Tanner: "Transparency Revisited," 2026 Global Culture Report. octanner.com

- NeuroLeadership Institute: "Creating Psychological Safety for Improved Performance" (March 2025). neuroleadership.com

- McKinsey: "Strengthening the R&D Operating Model for Pharmaceutical Companies" (January 2025). mckinsey.com

- McKinsey: "Simplification for Success: Rewiring the Biopharma Operating Model" (March 2025). mckinsey.com

Further Reading

- The Buffer Illusion: When Faster Approvals Expose Slower Organizations. On why coordination creates exposure, and why exposure is personally dangerous.

- The Coordination Fallacy: Two Realities in Drug Development. On how plans become compliance artifacts rather than working tools.

- Dark Matter in Drug Development: The Invisible Dependencies Driving Your Portfolio. On the cross-program dependencies that no project plan captures.

- Four Companies Wearing One Logo: The Architecture of Disconnection. On why clinical, regulatory, CMC, and commercial operate as separate companies.

About Me

I'm Andy, pharmacist, MIT engineer, 25 years in life sciences operations. I started Unipr because I watched too many medicines get delayed by operational barriers, not science failures. We support 100+ pipeline programs at top pharma and biotech across 30+ countries.

This article is personal. The meeting I described is composited from many meetings across many years, but the dynamics are real and the silence is ongoing. If you recognize the pattern, I'd welcome the conversation. Learn more at unipr.com.
