Estimating Is Not Calculating: The Resource Precision Gap Between Planning and Execution

Parametric planning multiplies ratios against scale. Task-level planning sums the actual work. One is an estimate. The other a calculation. Life sciences need both.

On January 9, 2026, the FDA published draft guidance endorsing Bayesian methodology in clinical trials: probability-based adaptations, interim decision-making, dose selection that evolves as evidence accumulates. Five weeks later, the EMA published its overview of comments received on the ICH E20 guideline, completing a public consultation cycle that began in June 2025. Regulators across the US, EU, and Japan are now formally harmonizing expectations for clinical trials that learn as they go.

This is good news for patients. Adaptive designs concentrate resources where evidence points and get better answers faster. For an industry where development timelines routinely stretch beyond a decade and costs exceed $2 billion per therapy, the ability to adapt mid-execution is an operational imperative, not just a methodological preference.

But what makes this development consequential beyond clinical operations is the resource ripple it creates across the entire organization.

When a trial adapts, when a dose arm drops or enrollment criteria shift or a new cohort is added, the clinical work changes. So does the regulatory work: amended submissions, revised labeling strategies, updated agency interaction plans. So does the CMC work: adjusted batch requirements, recalibrated supply forecasts, revised stability protocols. So does the commercial work: updated market models, revised launch assumptions, recalibrated pricing analytics.

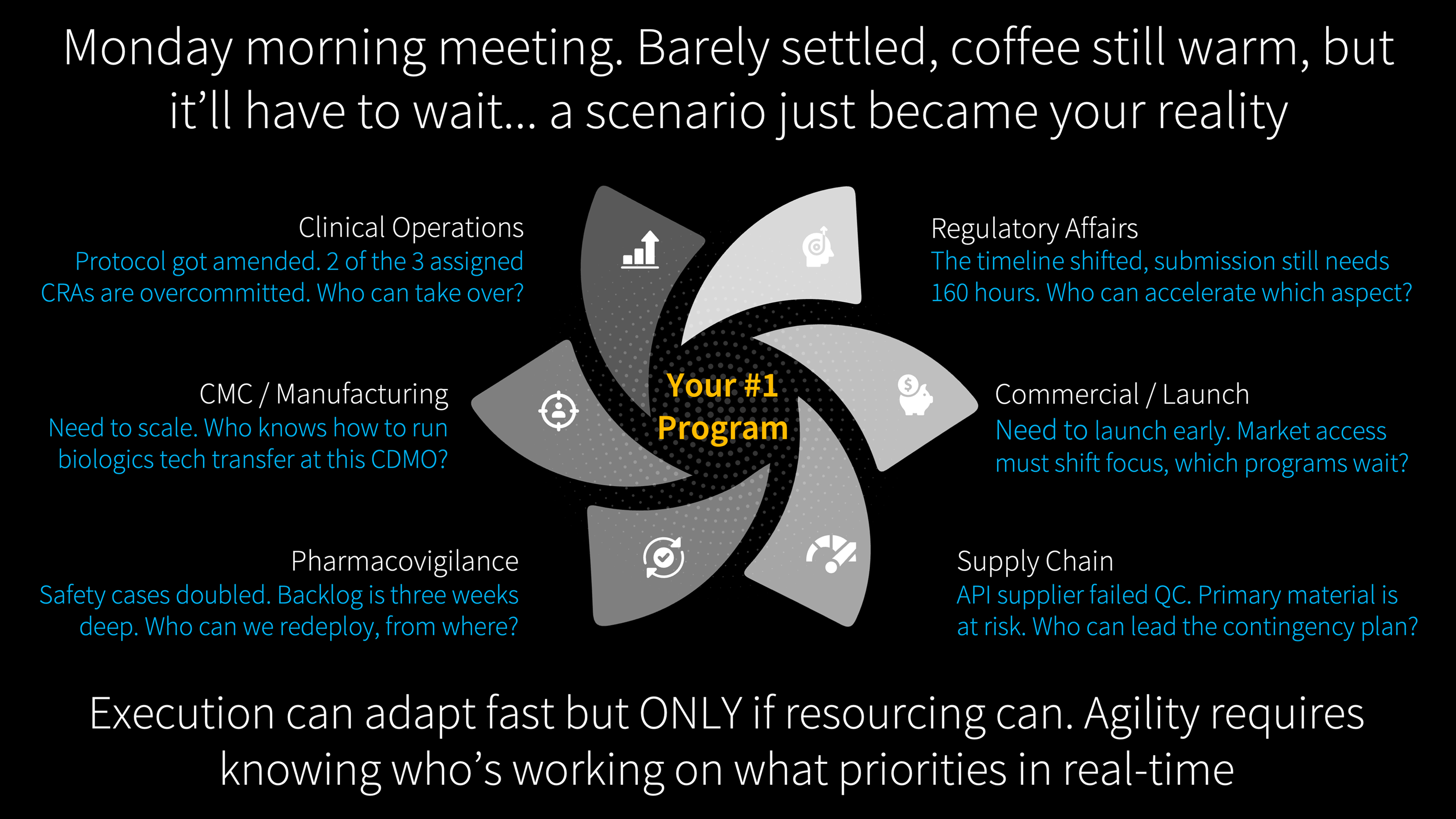

Adaptive trial design is the most visible expression of a broader reality. Across every function, circumstances shift faster than annual planning cycles can absorb. Regulatory agencies issue surprise requirements. Competitors launch ahead of schedule. Supply chains fracture under tariff pressure. Protocol amendments reshape the work mid-execution. And in every case, when the plan changes on Monday morning, the first question is always: who is available to do this work, and what are they currently committed to?

That answer, across most of the industry, takes weeks to assemble. Meetings are convened, spreadsheets are updated, phone calls are made. By the time the picture is clear, the moment for rapid response has passed.

It does not have to be this way.

The Speed of Decisions vs. the Speed of Reassignment

Circumstances in drug development evolve rapidly. They always have. What has changed is this: execution plans can now evolve equally fast to meet them, if the people driving those plans can be rapidly reassigned and matched to the new work that circumstances demand.

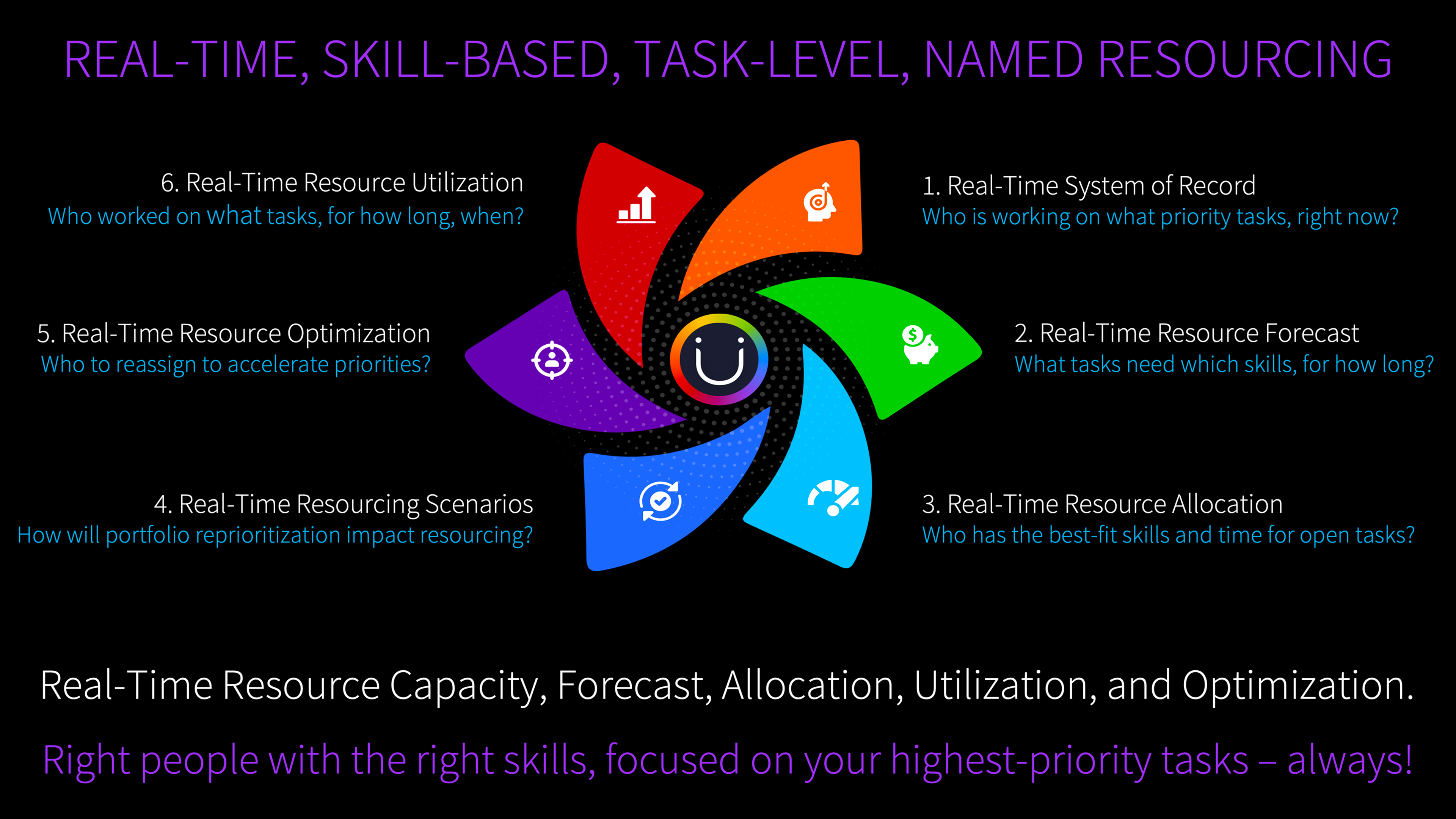

That "if" is the bottleneck. Not strategy. Not scenario planning. Not adaptive trial design. The bottleneck is the resource layer: the ability to see, in real time, who is doing what work, at what level of commitment, and what happens across the portfolio when they need to move.

Every VP of portfolio management, R&D operations, or program leadership has lived through some version of the following.

A trial adapts mid-execution. A dose arm drops based on interim analysis. Monitoring visits decrease for the discontinued arm but increase for the expanded cohort. The biostatistics team's interim analysis workload stays constant (it is a fixed block of effort regardless of the timeline shift), but the data management team's query resolution volume just changed because the patient population shifted. Regulatory needs to amend submissions across two agencies. CMC needs revised batch forecasts. Each function absorbs a resource ripple, and every person affected is simultaneously committed to other programs.

A regulatory agency issues a surprise post-marketing commitment that was not in the plan. New work appears. People need to be reassigned. From which programs? At what cost to the timelines they leave behind?

A competitor launches first and the commercial team needs to accelerate. Medical affairs, market access, and field operations all need to move faster, with the same people who are currently committed to launch preparations for other products.

A CDMO partner announces a two-month delay. Tech transfer timelines shift. QC testing schedules cascade. Supply chain needs to activate the dual-source contingency plan. The people who execute that contingency are currently executing something else.

In each case, the ability to respond depends on the same thing: real-time visibility into who is doing what, and what happens downstream when they move. With that visibility, execution adapts at the speed of decisions. Without it, weeks are lost to the mechanics of figuring out what should take hours.

The Altitude That Serves and the Altitude That Doesn't

As "People Are Not Your Greatest Asset" diagnosed, demand generation in pharma starts at the wrong altitude. That article examined how the distorted demand signal feeds proxy-based allocation. This article addresses a different question: what would the demand signal look like if it were built from the work itself, and why does that matter more now than it did five years ago?

Portfolio-level estimation answers an essential question: given the portfolio we plan to execute, how many people do we need, by function, by quarter? The algorithms that answer it have been calibrated over decades. At the portfolio level, they work. Some organizations report functional-level forecast accuracy within 10%, which is impressive. But every parametric model carries a hidden assumption: because it is derived from averages, it treats each program as if it resembled the average. When a specific program diverges, there is no mechanism inside the algorithm to flag the divergence. The number arrives, gets endorsed, and becomes the plan. The gap between functional-level accuracy and program-level precision is where resource agility either exists or does not.

What makes this gap consequential now is that it extends far beyond R&D. Even within R&D, only a fraction of the industry uses enterprise portfolio management tools for resource forecasting. The rest of the organization (regulatory affairs, CMC, commercial, medical affairs, market access, supply chain, pharmacovigilance) uses the same top-down estimation approach in custom-built spreadsheets and project trackers. Different tools, same methodology, same inability to flex when circumstances change at the program level. And as operations become more adaptive, more parallel, and more scenario-driven across all of these functions, the resolution gap means programs get staffed based on templates that no longer match the work being done.

As World Pharma Today reported in December 2025, the industry recognizes that neither of the two dominant resource planning approaches adequately addresses modern portfolio complexity. The aggregate may be right. The distribution across programs is often wrong. And you cannot see the distribution from the altitude parametric estimation operates at.

288 Hours Is 288 Hours

One distinction, once visible, changes how you think about resource planning entirely. It applies to every function, not just clinical operations.

Not all work responds to change the same way. A biostatistics team needs 288 hours to complete an interim analysis. Whether the study runs on schedule or gets delayed by two months, the work is the same. A regulatory team needs 160 hours to prepare a Type II variation for EMA. A medical affairs team needs 120 hours to develop a publication manuscript. A market access team needs 80 hours per country to prepare a health technology assessment submission. The effort is fixed. If a resource model recalculates FTEs upward when the timeline extends, it overstates demand for all of these. The model should know that effort-constrained work carries a fixed number of hours regardless of calendar position.

A medical monitor reviews safety data four hours per day for the duration of active enrollment. A quality assurance reviewer audits manufacturing batch records at a steady daily rate. A pharmacovigilance team conducts ongoing signal detection at a consistent daily cadence. The daily commitment is fixed. What changes is how many days the work runs. These are rate-constrained: the daily intensity and the team size are constant. Duration is the only variable.
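The difference between these first two categories can be made concrete with a little arithmetic. What follows is a minimal sketch, not a description of any particular tool: the 40-hour week, the 8-week original window, the 120 and 160 enrollment days, and all function names are illustrative assumptions. Only the 288 hours and the four hours per day come from the examples above.

```python
HOURS_PER_WEEK = 40  # assumption: one FTE works 40 hours per week


def naive_hours(fte: float, weeks: float) -> float:
    """Template-style demand: hold FTEs constant and stretch them over the timeline."""
    return fte * HOURS_PER_WEEK * weeks


def effort_constrained_hours(total_hours: float, weeks: float) -> float:
    """Fixed block of effort: calendar duration does not change the total."""
    return total_hours


def rate_constrained_hours(hours_per_day: float, working_days: int) -> float:
    """Fixed daily commitment: the total grows only with the number of days."""
    return hours_per_day * working_days


# Interim analysis: 288 hours, planned over an assumed 8-week window.
planned_fte = 288 / (HOURS_PER_WEEK * 8)

# A two-month delay stretches the window to roughly 16 weeks.
delayed_naive = naive_hours(planned_fte, 16)         # FTE-times-duration model
delayed_actual = effort_constrained_hours(288, 16)   # effort-constrained model

# Medical monitor: 4 hours per day; enrollment extends from 120 to 160 working days.
monitor_before = rate_constrained_hours(4, 120)
monitor_after = rate_constrained_hours(4, 160)

print(f"Interim analysis, naive model after delay: {delayed_naive:.0f} h")
print(f"Interim analysis, effort-constrained:      {delayed_actual:.0f} h")
print(f"Medical monitor, before and after:         {monitor_before:.0f} h, {monitor_after:.0f} h")
```

Under these assumptions the naive model doubles the interim analysis to 576 hours, while the effort-constrained view keeps it at 288. The medical monitor's demand, by contrast, legitimately grows with the longer enrollment period.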

Monitoring visits scale with patients enrolled: one visit per site for every eight patients. Data cleaning scales with eCRF pages and query volume. Safety case reporting scales with adverse events. Commercial medical information requests scale with prescriber adoption after launch. Supply chain demand recalibration scales with the number of markets entering the launch sequence. Manufacturing changeovers scale with the number of product batches on the production schedule. These tasks are driven neither by calendar time nor by a fixed block of effort. They scale with the volume of operational activity that generates the work. When enrollment accelerates, or launch uptake exceeds forecast, or a safety signal increases reporting volume, resource demand changes in ways no annual template can detect in real time. These are throughput-driven tasks.
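As a rough illustration of throughput-driven demand, the monitoring rule of thumb can be written as a function of enrollment. This is a sketch under stated assumptions: the rule is interpreted as one visit per site for every eight patients enrolled at that site, rounded up, and the 12 hours per visit, the site names, and the enrollment figures are invented for the example.

```python
import math

PATIENTS_PER_VISIT = 8   # from the rule of thumb in the text
HOURS_PER_VISIT = 12     # assumption: illustrative effort per monitoring visit


def monitoring_visits(patients_by_site: dict[str, int]) -> int:
    """One visit per site for every eight patients enrolled there, rounded up."""
    return sum(math.ceil(n / PATIENTS_PER_VISIT) for n in patients_by_site.values())


def monitoring_hours(patients_by_site: dict[str, int]) -> int:
    """Demand follows visit volume, not calendar time or a fixed block of effort."""
    return monitoring_visits(patients_by_site) * HOURS_PER_VISIT


# Hypothetical enrollment per site, before and after enrollment accelerates.
planned = {"site_a": 16, "site_b": 8, "site_c": 4}
accelerated = {"site_a": 30, "site_b": 17, "site_c": 4}

print(monitoring_visits(planned), monitoring_hours(planned))
print(monitoring_visits(accelerated), monitoring_hours(accelerated))
```

The timeline never appears as an input. Demand moves only when the enrollment projection moves, which is the property an annual template cannot track.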

And some work is simply time-constrained. A regulatory submission window is 30 days. A pre-approval inspection has a fixed window. A product launch date is locked to a commercial calendar. When more work needs to happen within a fixed window, the only levers are more people or higher daily intensity.
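The two levers can be expressed directly. A minimal sketch, assuming a hypothetical 20-working-day window, an 8-hour day, and a work package that grows from 480 to 640 hours; none of these figures come from a real program.

```python
import math


def people_needed(total_hours: float, working_days: int, hours_per_day: float) -> int:
    """Lever one: hold daily intensity fixed and solve for headcount."""
    return math.ceil(total_hours / (working_days * hours_per_day))


def daily_hours_needed(total_hours: float, working_days: int, people: int) -> float:
    """Lever two: hold headcount fixed and solve for daily intensity."""
    return total_hours / (working_days * people)


WINDOW_DAYS = 20  # assumption: working days inside the fixed window

team_before = people_needed(480, WINDOW_DAYS, 8)       # original scope
team_after = people_needed(640, WINDOW_DAYS, 8)        # scope grows, window does not
intensity_after = daily_hours_needed(640, WINDOW_DAYS, team_before)

print(team_before, team_after, round(intensity_after, 1))
```

With the window fixed, the extra 160 hours either adds a fourth person or pushes the original three to well over ten hours a day. The model cannot absorb the change by extending the end date.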

Effort-constrained, rate-constrained, throughput-driven, time-constrained. These distinctions are not new to project management (scheduling tools such as Microsoft Project have distinguished fixed-work, fixed-duration, and fixed-units task types for decades). What is new is their operational consequence in life sciences at a moment when adaptive designs, parallel regulatory strategies, and scenario-based execution make the distinction material. When a trial adapts and the timeline shifts, knowing whether a task is effort-constrained or throughput-driven determines whether the resource model responds accurately or introduces a fiction.

The resource model that treats an interim analysis the same as a monitoring visit will get one of them wrong. The question is which one, and how long before anyone notices.

A critical point: these classifications are human judgments, not algorithmic outputs. When a planner determines that an interim analysis is effort-constrained, that is a deliberate decision: this work carries 288 hours regardless of what happens to the timeline. When a planner determines that monitoring visits are throughput-driven, that is another deliberate decision: this work scales with enrollment volume. The system does not make these calls. People do. What the system does is calculate the consequences of those judgments across the portfolio in real time, so the impact of a change is visible in hours rather than weeks.

Two Questions, One Architecture

"Headcount Is Not Capability" examined why adding people does not solve structural dysfunction. Its companion, "People Are Not Your Greatest Asset," examined how allocation breaks down through proxies. This article addresses a prior question: the demand signal itself.

Parametric resource planning multiplies ratios against scale. Bottom-up resource planning sums the actual work. The first produces an estimate. The second produces a calculation. Both are useful. But only one can tell you that Study A's database lock and Study B's interim analysis need the same biostatistician in the same month.
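To see why only the task-level view can surface that conflict, consider a small sketch of contention detection. The `Assignment` record, the 160-hour monthly capacity, and every name and hour figure here are hypothetical; the point is only that the check needs named people and named tasks, which a ratio-based estimate does not contain.

```python
from collections import defaultdict
from dataclasses import dataclass

MONTHLY_CAPACITY = 160  # assumption: available hours per person per month


@dataclass(frozen=True)
class Assignment:
    person: str
    program: str
    task: str
    month: str   # for example "2026-09"
    hours: float


def find_contention(assignments, capacity=MONTHLY_CAPACITY):
    """Group task assignments by person and month; return the overcommitted slots."""
    by_slot = defaultdict(list)
    for a in assignments:
        by_slot[(a.person, a.month)].append(a)
    return {
        slot: items
        for slot, items in by_slot.items()
        if sum(a.hours for a in items) > capacity
    }


assignments = [
    Assignment("biostatistician_1", "Study A", "database lock", "2026-09", 100),
    Assignment("biostatistician_1", "Study B", "interim analysis", "2026-09", 120),
    Assignment("biostatistician_2", "Study C", "analysis plan review", "2026-09", 60),
]

conflicts = find_contention(assignments)
for (person, month), items in conflicts.items():
    tasks = ", ".join(f"{a.program} {a.task}" for a in items)
    print(f"{person} is overcommitted in {month}: {tasks}")
```

At the functional level these three assignments total 280 hours against 320 hours of capacity, so the function looks adequately staffed. The conflict exists only at the level of the individual.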

There are two questions, not one. Both are worth answering. Together they are more powerful than either alone.

Question one: "How many people does the portfolio need, by role, by quarter?" Parametric estimation. The CFO's question. Answered rigorously by algorithms calibrated to decades of execution data.

Question two: "What specific work needs to happen, by whom, in which window, and how does that change when circumstances shift?" Task-level calculation. The operating question. The one that currently gets answered through spreadsheets, institutional knowledge, and resource managers who carry the complexity in their heads.

"By whom" is where the precision lives, and it is the territory that People Are Not Your Greatest Asset examined in depth: the gap between matching by availability and matching by fit. What this article adds is the structural prerequisite. Skill-based matching requires knowing what the work actually is at the task level. "We need a regulatory affairs specialist" is a role-level request that any resource manager can fill from a roster. "We need someone who has filed Type II variations with EMA and has availability in Q3" is a task-level request that requires visibility into both the work and the people. Most organizations match by availability because matching by fit requires visibility they do not yet have. When the work structure exists at the task level, that visibility becomes possible.

Most organizations answer the first with precision and the second informally. As portfolios grow more complex and operations more dynamic, the informal answers create friction that stays invisible until it manifests: an overcommitted specialist who misses a deadline, contention between programs that surfaces as a crisis rather than a scheduling decision, an amendment whose resource impact takes three weeks and a cross-functional meeting to quantify.

The demand signal needs to originate from the work itself, not from templates applied to the work. When you build a work breakdown structure based on the actual scope, complexity, and risk profile of a specific program and derive resource demand from that structure, you get a calculation, not an estimate. Compare that calculation against the top-down forecast. When the parametric algorithm says 30 biostatistics FTEs in Q3 and the task-level calculation says 38, that gap is worth a conversation. It does not mean the algorithm is wrong. It means there is a discrepancy that deserves investigation.
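The comparison itself is simple arithmetic. In the sketch below, the ratio of 2.5 FTEs per active study, the 12 studies, the 520 hours per FTE per quarter, and the three work-package totals are all invented so that the two signals reproduce the 30 and 38 FTE figures above. The 10% review tolerance is likewise an assumption, not a recommended threshold.

```python
HOURS_PER_FTE_QUARTER = 520  # assumption: 13 weeks at 40 hours


def parametric_fte(ratio_per_unit: float, scale_units: float) -> float:
    """Top-down estimate: a calibrated ratio multiplied against scale."""
    return ratio_per_unit * scale_units


def task_level_fte(task_hours: list[float]) -> float:
    """Bottom-up calculation: sum the work, then convert hours to FTEs."""
    return sum(task_hours) / HOURS_PER_FTE_QUARTER


def gap_to_review(estimate: float, calculation: float, tolerance: float = 0.10):
    """Return the relative gap and whether it is large enough to warrant a conversation."""
    gap = (calculation - estimate) / estimate
    return gap, abs(gap) > tolerance


estimate = parametric_fte(ratio_per_unit=2.5, scale_units=12)

# Hypothetical Q3 biostatistics hours from three groups of work packages.
calculation = task_level_fte([7000, 6760, 6000])

gap, needs_review = gap_to_review(estimate, calculation)
print(f"Parametric: {estimate:.0f} FTE, task-level: {calculation:.0f} FTE, gap: {gap:.0%}")
print("Investigate" if needs_review else "Within tolerance")
```

The flag says nothing about which number is right. It only marks the discrepancy as worth investigating.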

And then close the loop. After six months of actuals, you know which signal was closer. That learning improves both: the parametric model gets better calibration data, and the task-level model gets validated duration and effort assumptions. Without actuals flowing back, the bottom-up calculation is a one-time planning exercise. With actuals, it becomes a learning system.
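One way to picture that loop is sketched below. The actual of 36 FTEs, the observed 320 hours for an interim analysis, and the blending weight are hypothetical inputs, and the simple weighted blend is a stand-in chosen for illustration rather than a method described in this article.

```python
def closer_signal(parametric: float, task_level: float, actual: float) -> str:
    """Compare each signal's absolute error against what actually happened."""
    err_parametric = abs(parametric - actual)
    err_task_level = abs(task_level - actual)
    if err_parametric == err_task_level:
        return "tie"
    return "parametric" if err_parametric < err_task_level else "task_level"


def recalibrate(assumed_hours: float, actual_hours: float, weight: float = 0.5) -> float:
    """Blend the prior planning assumption with the observed actual.

    weight is the share given to the new observation (assumed 0.5 here).
    """
    return (1 - weight) * assumed_hours + weight * actual_hours


# Six months later: hypothetical actual demand for the quarter was 36 FTEs.
winner = closer_signal(parametric=30, task_level=38, actual=36)

# Hypothetical actuals show the interim analysis took 320 hours, not 288.
next_assumption = recalibrate(assumed_hours=288, actual_hours=320)

print(winner, next_assumption)
```

The same comparison, run in the other direction, gives the parametric model its calibration data: the ratio is adjusted toward whatever the actuals imply.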

This is where most organizations hit a practical wall. The actuals data feeding their planning models is suspect because the people recording time are checking boxes, not representing reality. Everyone in the industry knows this. Timesheets capture compliance, not truth. The opportunity is to invert the burden: instead of asking people to recall and enter time from scratch, present them with AI-suggested allocations based on their calendar, their assignments, and their task history, and let them correct. People will correct suggestions far more readily than they will fill in blank fields. The accuracy of the utilization data goes up, and with it, the accuracy of every forecast and allocation that depends on it.

Portfolio estimation for headcount planning. Task-level calculation for allocation accuracy. The first gives you how many. The second gives you who, for what, and when. One without the other leaves a gap. Together, they close it.

What Changes When You Can See the Work

Return to Monday morning. The protocol amendment lands. Instead of convening a meeting and opening a spreadsheet, imagine this:

The amended monitoring schedule recalculates automatically because the model knows monitoring visits are throughput-driven and the enrollment projection just changed. The biostatistics team's interim analysis allocation stays constant because the model knows that work is effort-constrained: 288 hours regardless of the new timeline. The regulatory team's submission amendment appears as a discrete 160-hour work package with a fixed deadline. The CMC team's revised batch forecast triggers a supply chain recalibration that scales with the number of markets affected.

The resource cost of the amendment is visible across every function before the amendment is approved. The cross-program impact, the fact that the regulatory writer needed for this amendment is also committed to Program B's NDA module next month, surfaces as a scheduling decision rather than a crisis discovered at the deadline.

This is what "Dark Matter in Drug Development" described as invisible portfolio dependencies: contention for shared resources that no individual project plan captures. At parametric resolution, both programs look adequately resourced. The conflict only becomes visible at the task level.

Now scale this beyond clinical. A competitor launches ahead of schedule and the commercial timeline compresses: the model shows which medical affairs, market access, and field operations people need to move, and what happens to the launch preparations they leave behind. A supply chain disruption redirects manufacturing: the model recalculates QC, validation, and regulatory update tasks from the work itself. A regulatory agency requests an additional post-marketing study: the resource cost appears in the same governance meeting where the strategic response is decided, not three weeks later after someone reconciles five spreadsheets.

When leadership asks "can we accelerate this program?" the answer is no longer a judgment call made in a conference room. It is grounded in specifics: this milestone depends on two deliverables whose owners are overcommitted across three programs this month. These are the people who would need to move, these are the programs they would leave, and this is what happens to those timelines. The bottleneck is visible before it manifests as a delay. When a scenario plan triggers, the execution path is visible immediately because the work structure and the resource assignments already exist. The plan adapts because the work model adapted.

All of these examples are reactive: something changed, who moves? But the deeper opportunity is proactive. Even when nothing has gone wrong, are the right people on the highest-priority work right now? Are there specialists assigned to lower-priority programs whose skills would generate more value on the portfolio's top three priorities? Are there programs where the team composition no longer matches the phase the program has entered? When you can see the work, the assignments, and the skills at the task level across the entire portfolio, optimization becomes a continuous discipline rather than a crisis response. The organizations that get this right will not just respond faster when plans change. They will be running better-matched teams every day, in the steady state, before anything goes wrong.

PwC's 2026 pharmaceutical outlook describes the winning organizations of the next decade as those where "intelligent workflows reallocate resources dynamically and shrink decision cycles from months to minutes." ZS Associates reports 41% of leaders planning to automate R&D workflows with AI agents. PharmaSource argues that "operational excellence and planning discipline may matter more than cutting-edge technology." They are describing different facets of the same capability: execution that moves at the speed of decisions because the resource layer can keep up.

The Cost of Not Seeing

The science of drug development has evolved to embrace adaptation. Regulators have endorsed it. Boards expect it. Portfolio strategies depend on it. The resource layer has an opportunity to complete the picture, not by replacing what has worked for decades, but by adding the task-level precision that turns strategic agility into operational reality.

Every protocol amendment that takes weeks to resource instead of hours is a week patients wait. Every cross-program contention that surfaces as a crisis rather than a planning decision is a delay that did not need to happen. Every scenario plan that cannot be executed because nobody knows who is actually available is a strategy that stops at the slide deck.

Organizations that pair portfolio-level estimation with task-level calculation will not be the ones with better strategies. They will be the ones who could act on their strategies when it mattered. Not because they planned better at the start. Because when the plan changed Monday morning, their people could move by Monday afternoon.

The question is not whether this precision is achievable. It is whether the cost of operating without it, compounding quietly across hundreds of programs and thousands of task assignments, remains acceptable in an era where the science itself has learned to adapt.

References

- FDA: "Use of Bayesian Methodology in Clinical Trials" (January 9, 2026). fda.gov

- EMA/ICH E20: Overview of comments on adaptive designs (February 16, 2026). ema.europa.eu

- World Pharma Today: "Smarter Resource Planning for Complex Pharma Development Portfolios" (December 26, 2025). worldpharmatoday.com

- PwC: "Future of Pharma: Breakthroughs at Scale" (2026). pwc.com

- ZS Associates: "Pharma Industry Outlook 2026." zs.com

- PharmaSource: "What to Expect in Pharma Manufacturing in 2026." pharmasource.global

Further Reading

- Headcount Is Not Capability. On why adding people does not solve structural dysfunction.

- People Are Not Your Greatest Asset. On how the allocation mechanism breaks down at each step.

- Dark Matter in Drug Development. On cross-program dependencies that no project plan captures.

About Me

I'm Andy, pharmacist, MIT engineer, 25 years in life sciences operations. I started Unipr because I watched too many medicines get delayed by operational barriers, not science failures. We support 100+ pipeline programs at top pharma and biotech across 30+ countries.

This article reflects work we do every day with organizations navigating the shift from portfolio-level parametric estimation to task-level resource calculation. If you are exploring named allocation, adaptive resourcing, or cross-program contention visibility, I would welcome the conversation. Learn more at unipr.com.
